My AI dev workflow - building the right thing

With coding agents, building "something" is nearly free now. Everyone reports one-shotting a new project every day. But building the "right" thing is still very hard work. This post describes how my workflow changed with AI, how I build the right thing, and how I have fun along the way.

Many devs starting out with agents type a prompt, sometimes use plan mode, fix errors, and repeat. Others go all in with Ralph loops, "superpower" skill workflows, or elaborate MCP setups. The outcome is often an overly complex nightmare of a process, or total mental overload about what is going on.

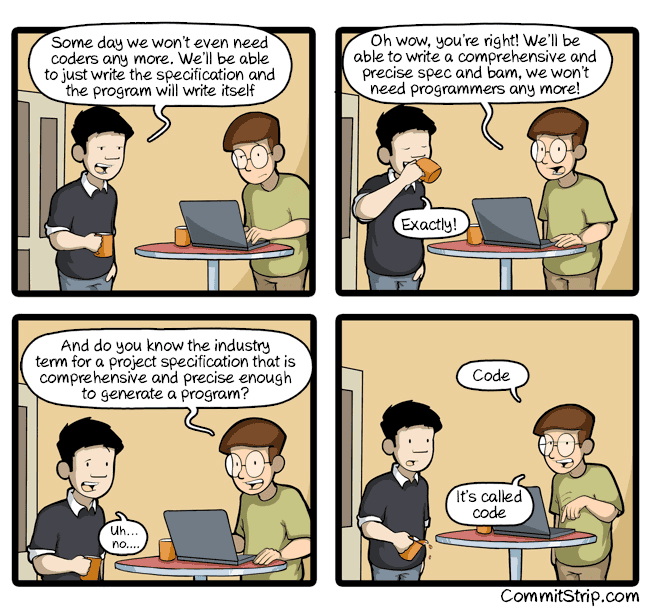

If you feed AI garbage or imprecise requirements, the missing clarity translates directly into unclear results. You build something; it will just not be the right thing. There is no way around doing the hard work of discovering exactly what needs to be done. I settled on a simple but very efficient workflow to get things done: putting in the hard work of writing the specs.

Organising Requirements

The workflow I use is extremely simple at its core, with no magic step and no fancy framework: iterate on requirements until they are crystal clear, iterate on tests until they are crystal clear, iterate on code until it is crystal clear. I never let Claude write any code until I have a carefully reviewed plan.

For each feature I work on I always create a docs/{date}-{ticket}/requirements.md document. I feed Claude all input related to that task:

- related code

- documentation

- ticket details

- summary documents of the whole project

- my thoughts, how I want to approach it
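The `docs/{date}-{ticket}/requirements.md` convention is easy to scaffold. The following is a minimal sketch, not a prescribed tool: the ISO date format, the ticket id `SHOP-123`, and the function names `requirements_path` and `scaffold` are assumptions made for illustration, and the section headings are borrowed from the example document further down.

```python
from datetime import date
from pathlib import Path

# Section headings borrowed from the example requirements.md in this post.
SECTIONS = [
    "Progress",
    "Summary",
    "E2E Test Scenarios",
    "Implementation Outline",
    "Out of Scope",
]


def requirements_path(ticket: str, day: date, root: Path = Path("docs")) -> Path:
    """Build docs/{date}-{ticket}/requirements.md (ISO dates are an assumption)."""
    return root / f"{day.isoformat()}-{ticket}" / "requirements.md"


def scaffold(ticket: str, day: date, root: Path = Path("docs")) -> Path:
    """Create the document with empty sections, never overwriting an existing one."""
    path = requirements_path(ticket, day, root)
    path.parent.mkdir(parents=True, exist_ok=True)
    if not path.exists():
        body = f"# {ticket}\n\n" + "".join(f"## {name}\n\n" for name in SECTIONS)
        path.write_text(body, encoding="utf-8")
    return path


if __name__ == "__main__":
    # Hypothetical ticket id, for illustration only.
    print(scaffold("SHOP-123", date.today()))
```

The empty skeleton is only a starting point; the agent fills it from the inputs listed above.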

Then I let Claude fill that document. What exactly the document looks like depends on the task at hand, but for adding a new API to a web service it would cover:

- endpoints

- query parameters and their exact behaviour

- response shape (depending on parameters)

- details of all the returned fields

- auth requirements of the API

- error responses

- all the e2e tests I want to have

- first entry points for where to add this in the codebase

- things out of scope

- progress in implementing this

Here is a trimmed example of what such a requirements.md looks like for adding a new read API to a webservice:

Example requirements.md (trimmed down **a lot**)

# Product Attribute Selector API

## Progress

| Phase | Status |
|-------|--------|
| Phase 1: Design | DONE |
| Phase 2: Specify | DONE |
| Phase 3: Implement | IN PROGRESS |

## Summary

A new read-only endpoint that returns a lightweight attribute matrix for all variants of a product. Designed for product detail pages with 10k+ variants. Returns only the requested attributes and minimal availability data per variant.

## REST API Specification

### Endpoints

GET /products/{productId}/variant-attributes

GET /products/key={productKey}/variant-attributes

### Query Parameters

| Parameter | Required | Default | Description |
|-----------|----------|---------|-------------|
| `filter[attributes]` | Yes | - | Attribute names to include (repeatable) |
| `staged` | No | `false` | `true` returns draft data |
| `localeProjection` | No | - | Filters localized values to these locales |

### Response Shape

{
  "productId": "<uuid>",
  "attributes": [
    { "name": "color", "label": { "en": "Color" }, "type": "enum" }
  ],
  "variants": [
    {
      "id": "<uuid>",
      "sku": "JACKET-RED-M",
      "availability": { "isOnStock": true, "availableQuantity": 10 },
      "attributes": [
        { "name": "color", "value": { "key": "red", "label": "Red" } }
      ]
    }
  ]
}

### Error Handling

| Condition | HTTP Status |
|-----------|-------------|
| Missing `filter[attributes]` | 400 |
| Product not found | 404 |
| Insufficient scope | 403 |

## E2E Test Scenarios

1. Happy path: returns requested attributes for all variants

2. Non-existent attribute names are silently omitted

3. Missing filter[attributes] returns 400

4. staged=false returns only published variants

5. staged=true returns all variants including drafts

6. localeProjection filters localized values

7. Product not found returns 404

## Implementation Outline

1. Route registration (new HTTP service, parameter parsing)

2. Parameter validation (filter[attributes] required, staged default)

3. Authorization check (different scopes for staged vs published)

4. Service layer (resolve product, fetch attributes, build response)

5. Response serialization

## Out of Scope

- GraphQL implementation (separate ticket)

- Pagination (all variants returned at once, up to 10k)

- Price information

This creates a first version of the requirements. Next, I refine them.

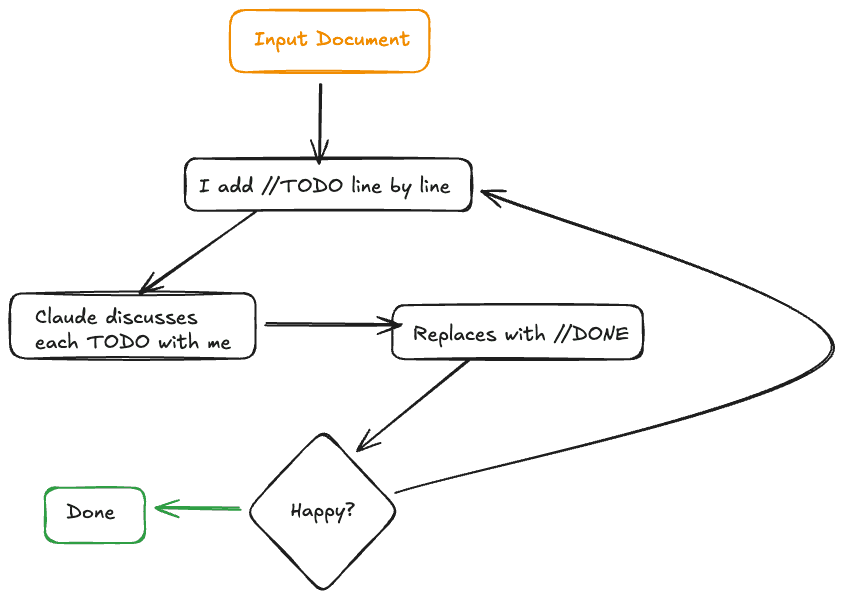

Iterating on Requirements - TODO loop

The first shot at the requirements is never sufficient, so now starts the phase that may well take the longest of the whole development.

I'll go through the requirements.md line by line and add TODO statements on everything I want to further discuss or change.

// TODO: we should also test that the `include` parameter can never be empty

## E2E scenarios

- ...

As agents sometimes just ignore my TODOs or silently drop them, I always let Claude address TODOs one by one and replace each fixed TODO with a DONE.

// DONE e2e tests now cover `include` can never be empty

## E2E scenarios

- test that missing `include` parameter will lead to 400 with error message: "..

This process will take hours and is the most important part. It avoids the garbage-in, garbage-out problem and ensures that later steps are more mechanical.
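A silently dropped TODO is easy to miss by eye, so the bookkeeping can also be checked mechanically. This is a minimal sketch under stated assumptions: it relies on the `// TODO` and `// DONE` marker format from the examples above, and the helper names `count_markers` and `dropped_todos` are made up for illustration.

```python
import re

# Matches marker lines such as "// TODO: ..." or "// DONE ..." (format from the examples above).
MARKER = re.compile(r"^[ \t]*//[ \t]*(TODO|DONE)\b", re.MULTILINE)


def count_markers(text: str) -> dict:
    """Count TODO and DONE marker lines in a requirements document."""
    counts = {"TODO": 0, "DONE": 0}
    for match in MARKER.finditer(text):
        counts[match.group(1)] += 1
    return counts


def dropped_todos(before: str, after: str) -> int:
    """Number of TODOs that disappeared without a new DONE taking their place."""
    old, new = count_markers(before), count_markers(after)
    removed = old["TODO"] - new["TODO"]
    resolved = new["DONE"] - old["DONE"]
    return max(0, removed - resolved)


if __name__ == "__main__":
    before = "// TODO: cover empty `include`\n// TODO: document 403\n## E2E scenarios\n"
    after = "// DONE e2e tests now cover `include`\n## E2E scenarios\n"
    # One TODO became a DONE, the other one vanished.
    print(dropped_todos(before, after))
```

Comparing the document before and after each agent turn (for instance against the last saved copy) flags the case where a TODO vanished instead of being turned into a DONE. The check only counts markers, so it cannot tell whether a TODO was addressed well; that part stays a manual review.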

With that, I have done a very, very in-depth refinement of the feature. This helps me build the theory and understanding of the current task. I am in control, I understand everything that is needed, and I am perfectly capable of reviewing any AI-generated code later. Creating these detailed requirements was for me! As a side effect, I now also have a great document for agents.

Source of truth - requirements.md

With that I have a crystal clear source of truth for the feature and this comes with some very nice benefits:

- context compaction is safe now: the plan is always there, easy to re-read, and progress is tracked in it

- this doc can be referenced again later whenever I work on a related feature; it's amazing documentation

- if multiple devs in a team use this approach, this doc could also be checked into version control and potentially even reviewed alongside the PR
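Because progress lives in the document itself, it can also be read back mechanically, for example to tell a fresh agent session where to resume after compaction. A minimal sketch, assuming the `## Progress` table layout from the example requirements.md above; `parse_progress` and `current_phase` are hypothetical names.

```python
from typing import Dict, Optional


def parse_progress(markdown: str) -> Dict[str, str]:
    """Read the two-column table under '## Progress' into {phase: status}."""
    phases: Dict[str, str] = {}
    in_section = False
    for line in markdown.splitlines():
        stripped = line.strip()
        if stripped.startswith("## "):
            # Only rows below the Progress heading are of interest.
            in_section = stripped == "## Progress"
            continue
        if not in_section or not stripped.startswith("|"):
            continue
        cells = [cell.strip() for cell in stripped.strip("|").split("|")]
        # Skip malformed rows, the header row, and the |---|---| separator row.
        if len(cells) != 2 or cells[0] == "Phase" or set(cells[0]) <= {"-"}:
            continue
        phases[cells[0]] = cells[1]
    return phases


def current_phase(phases: Dict[str, str]) -> Optional[str]:
    """First phase that is not DONE, or None when everything is finished."""
    for name, status in phases.items():
        if status.upper() != "DONE":
            return name
    return None


if __name__ == "__main__":
    doc = (
        "## Progress\n"
        "| Phase | Status |\n"
        "|-------|--------|\n"
        "| Phase 1: Design | DONE |\n"
        "| Phase 3: Implement | IN PROGRESS |\n"
        "## Summary\n"
    )
    print(current_phase(parse_progress(doc)))
```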

And yes, these specs are almost as much work as writing the code, but that is the whole point: I need this to understand the feature. If I did not spend hours on it, I would not understand it.

Writing tests

Next, for any non-trivial feature, I usually want to encode the expected behaviour in e2e tests first. They change the least when the implementation changes, as they treat the system as a black box. For that I usually enter plan mode and let Claude create a plan for how to write the e2e tests based on the doc.

But once the plan is written, I do not just accept it. Again I enter the TODO loop. I open Claude's plan file in an editor and review it line by line, adding TODOs and letting Claude address those TODOs one by one and replace them with "DONE".

Only then do I let Claude write any code for the first time: the e2e tests.

And you might have guessed it, I'll iterate on the tests again with a TODO flow.
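To make "black box" concrete, here is a minimal sketch of how three of the scenarios from the example requirements.md (happy path, missing `filter[attributes]`, product not found) could be written as checks that only see status codes and response bodies. The test stack is an assumption, as this post does not prescribe one: `http_get`, the `check_*` names, and any base URL passed in are hypothetical.

```python
import json
import urllib.error
import urllib.parse
import urllib.request
from typing import Callable, Dict, List, Tuple

# The checks only know this callable: (path, query params) -> (status, JSON body).
Get = Callable[[str, Dict[str, List[str]]], Tuple[int, dict]]

ENDPOINT = "/products/{product_id}/variant-attributes"


def http_get(base_url: str) -> Get:
    """Build a Get that talks to a running service; base_url is an assumption."""
    def get(path: str, params: Dict[str, List[str]]) -> Tuple[int, dict]:
        query = urllib.parse.urlencode(params, doseq=True)
        url = f"{base_url}{path}" + (f"?{query}" if query else "")
        try:
            with urllib.request.urlopen(url) as resp:
                return resp.status, json.loads(resp.read() or b"{}")
        except urllib.error.HTTPError as err:
            # Error bodies are ignored in this sketch; only the status matters.
            return err.code, {}
    return get


def check_happy_path(get: Get, product_id: str) -> None:
    """Scenario 1: only the requested attributes come back for each variant."""
    requested = ["color"]
    status, body = get(ENDPOINT.format(product_id=product_id),
                       {"filter[attributes]": requested})
    assert status == 200, status
    for variant in body["variants"]:
        names = {attr["name"] for attr in variant["attributes"]}
        assert names <= set(requested), names


def check_missing_filter_returns_400(get: Get, product_id: str) -> None:
    """Scenario 3: filter[attributes] is required."""
    status, _ = get(ENDPOINT.format(product_id=product_id), {})
    assert status == 400, status


def check_unknown_product_returns_404(get: Get, unknown_id: str) -> None:
    """Scenario 7: product not found."""
    status, _ = get(ENDPOINT.format(product_id=unknown_id),
                    {"filter[attributes]": ["color"]})
    assert status == 404, status
```

Passing the `get` callable in keeps the checks independent of how the service is implemented, which is the property that makes e2e tests stable across refactorings. Against a real deployment one would pass `http_get("<base url>")`; in a unit-style dry run a stub with the same signature works too.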

The mechanical part: implementing

I love writing code, so once I have a very clear understanding of the feature and an e2e suite I can rely on, I want to have some fun and write most of the code myself. This might look like me writing the happy path and letting Claude fill in some gaps or the less interesting parts. If Claude writes code, it will, for sure, go through the TODO flow!

This can be different per task again, sometimes I write more, sometimes Claude writes more.

Implementation is usually pretty smooth now though. But there is always the chance that I'll discover something new and update the requirements.md on the way. It is not set in stone.

Lastly I also let another agent, without context of the implementation, review all the work done by comparing it against the requirements.md.

Summary

So what does that all give me? I think AI helps me be a better developer. I use AI to enhance my refinement of the task at hand. I use AI to do the boring tasks for me and I can still do the fun parts.

The work just shifted: the up-front refinement takes way more time than before, but it helps me build the right thing! The in-depth specs do not necessarily save time, but my work is of higher quality: less "discovering along the way", less writing boilerplate. And most importantly, I do not build something I barely understand; I build the right thing.